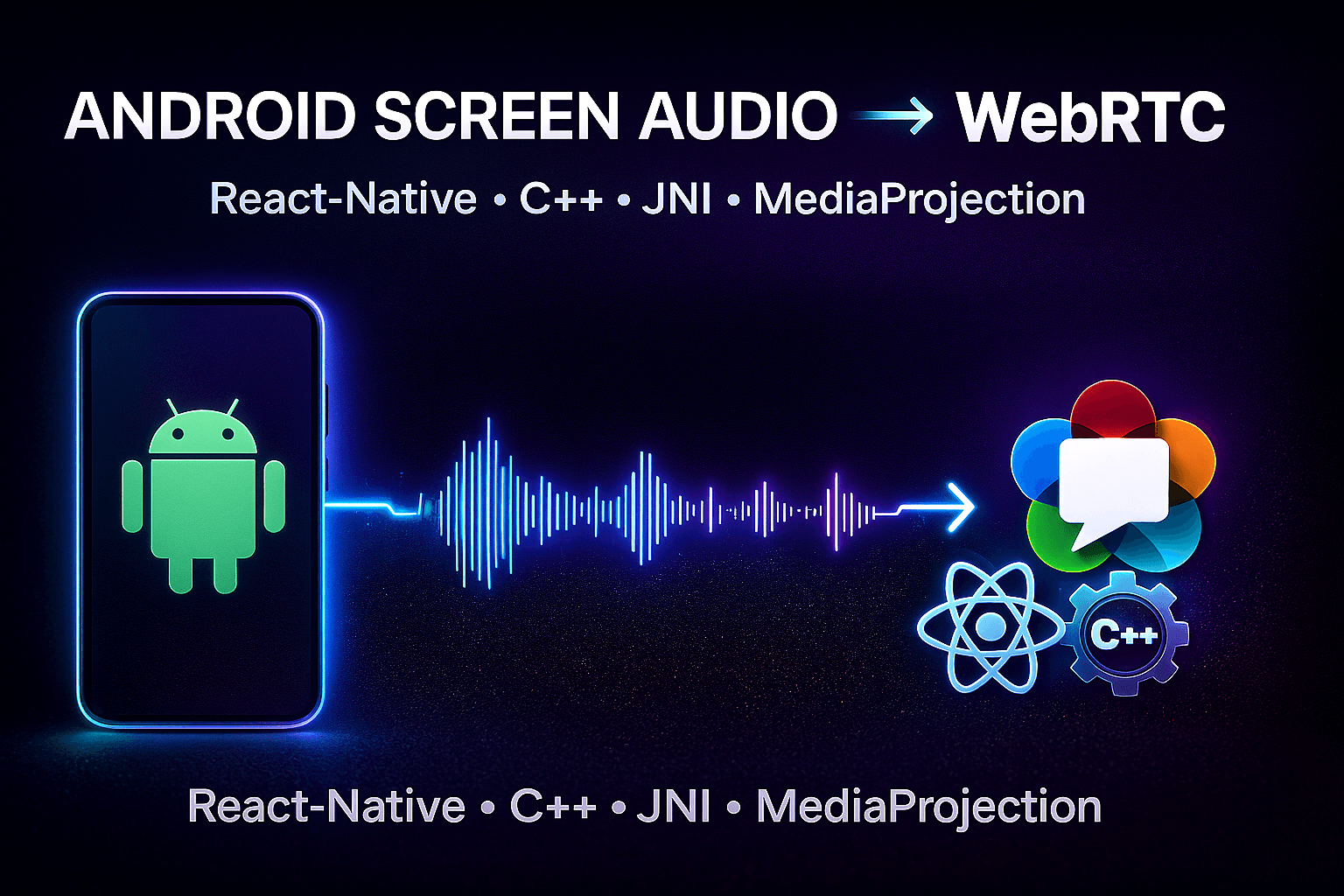

The Missing Guide: Android Screen Audio Streaming with WebRTC (React-Native, JNI, C++ ADM, WebRTC Build)


Status: Work in Progress

Last Updated: 26-11-2025

There are no docs or API references for the webrtc_android source code, which is why this took me months of research. I am still searching across the internet for a way to send custom AudioRecord samples into the native WebRTC pipeline.

Around a month ago, I had to build a simple React Native application that captures the audio playing while the screen is being recorded and transmits it over WebRTC, on the LAN only. It should have been fairly simple: my goal was to share the live screen audio so that it plays in real time on all the other devices connected over the LAN.

But it was not going to be that easy. In react-native-webrtc, the navigator.mediaDevices.getDisplayMedia() API only gives you a VideoTrack for the screen capture, not an AudioTrack for the screen audio. So I tried different libraries, but even when you do get the audio, you don't get an AudioTrack, which is what WebRTC wants. So I opened the source code of react-native-webrtc: there is no screen audio capture service in it, which means I can't send screen audio through react-native-webrtc as it is.

While researching how companies like Discord, AudioRelay and, most importantly, AntMedia are able to share audio over WebRTC on Android, I found that AntMedia has a React-Native-WebRTC-SDK with something relevant: they build WebRTC from source with custom changes and copy the org/webrtc package and libjingle_peerconnection_so.so into their application. Still, I found nothing there that was right for me. I am pretty sure they all build a custom C++ implementation of WebRTC combined with AudioPlaybackCaptureConfiguration.

Then I decided to implement my own track in react-native-webrtc by capturing audio from the screen and exposing a Java-TypeScript API for React Native. First, we have to start the screen recording and ask for permission using the Android MediaProjection API. Then, using the AudioPlaybackCapture API, we can capture screen audio; this depends on the MediaProjection token, which is passed in when building the AudioPlaybackCaptureConfiguration. And yes, I am able to capture the audio and receive it in the AudioRecord class, from which I get the PCM samples to do whatever I want with.

Now I am creating a method, createScreenAudioTrack, to create a track inside the react-native-webrtc library. And yes, this works: the React Native code now gets a new AudioTrack alongside the VideoTrack. But we can't directly pass the audio data received through the AudioPlaybackCaptureConfiguration into it (and that configuration is the reason we need the MediaProjection Intent). I now have the incoming audio and the track, but sending data into the WebRTC track is difficult, because the only way is to create a new AudioDeviceModule specifically for the screen capture audio. That, however, will collide with the default microphone AudioDeviceModule that WebRTC uses to deliver microphone PCM, so the microphone track will no longer get any audio. But first, let's see whether I can create an AudioDeviceModule at all.
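One possible way around the microphone collision (an assumption, not something verified in this project) is to keep a single custom ADM and mix both sources before handing audio to WebRTC. The minimal sketch below assumes both inputs are mono, 16-bit, at the same sample rate, and already cut into equal-length frames; `MixPcm16` is a hypothetical helper name, not a WebRTC API.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

// Hypothetical helper: mixes one frame of microphone PCM with one frame of
// screen-playback PCM (both mono, 16-bit, same sample rate and length).
std::vector<int16_t> MixPcm16(const std::vector<int16_t>& mic,
                              const std::vector<int16_t>& screen) {
  assert(mic.size() == screen.size());  // frames must cover the same duration
  constexpr int32_t kMin = std::numeric_limits<int16_t>::min();
  constexpr int32_t kMax = std::numeric_limits<int16_t>::max();
  std::vector<int16_t> out(mic.size());
  for (std::size_t i = 0; i < mic.size(); ++i) {
    // Add in 32 bits, then clamp so loud peaks saturate instead of wrapping.
    int32_t sum = int32_t{mic[i]} + int32_t{screen[i]};
    out[i] = static_cast<int16_t>(std::min(kMax, std::max(kMin, sum)));
  }
  return out;
}
```

Plain summing with clamping is the simplest mixer. It clips when both sources are loud at the same moment, so scaling each input down or adding a limiter are common alternatives.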

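```java
import android.media.AudioAttributes;
import android.media.AudioFormat;
import android.media.AudioPlaybackCaptureConfiguration;
import android.media.AudioRecord;

// Requires Android 10 (API 29+), the RECORD_AUDIO permission, and a
// MediaProjection obtained from the screen-capture consent Intent.
AudioPlaybackCaptureConfiguration config = new AudioPlaybackCaptureConfiguration.Builder(mediaProjection)
        .addMatchingUsage(AudioAttributes.USAGE_MEDIA) // capture media playback
        .build();

AudioFormat format = new AudioFormat.Builder()
        .setEncoding(AudioFormat.ENCODING_PCM_16BIT)
        .setSampleRate(48000)
        .setChannelMask(AudioFormat.CHANNEL_IN_MONO)
        .build();

// Use at least the minimum buffer size the device reports for this format.
int bufferSize = AudioRecord.getMinBufferSize(
        48000, AudioFormat.CHANNEL_IN_MONO, AudioFormat.ENCODING_PCM_16BIT) * 2;

AudioRecord ar = new AudioRecord.Builder()
        .setAudioFormat(format)
        .setBufferSizeInBytes(bufferSize)
        .setAudioPlaybackCaptureConfig(config)
        .build();

ar.startRecording(); // read() fails until recording has started

byte[] buf = new byte[bufferSize];
int r = ar.read(buf, 0, buf.length); // bytes read, or a negative error code
```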
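AudioRecord.read() returns however many bytes happen to be ready, while WebRTC's audio device module moves audio in 10 ms chunks. At 48000 Hz mono that is 48000 / 100 = 480 samples, or 960 bytes of 16-bit PCM, per chunk. The sketch below buffers arbitrary-size chunks and emits fixed 10 ms frames; `TenMsFramer` and `SamplesPer10Ms` are hypothetical names, and the callback marks where a real ADM would hand each frame to WebRTC's AudioTransport (not shown, since that interface depends on the WebRTC revision you build).

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <utility>
#include <vector>

// Samples in one 10 ms frame: sample_rate / 100 per channel,
// e.g. 48000 Hz mono -> 480 samples (960 bytes of 16-bit PCM).
constexpr std::size_t SamplesPer10Ms(int sample_rate_hz, int channels) {
  return static_cast<std::size_t>(sample_rate_hz / 100) *
         static_cast<std::size_t>(channels);
}

// Hypothetical helper: buffers PCM chunks of any size and emits
// fixed 10 ms frames to a callback.
class TenMsFramer {
 public:
  using FrameCallback =
      std::function<void(const int16_t* frame, std::size_t samples)>;

  TenMsFramer(int sample_rate_hz, int channels, FrameCallback on_frame)
      : frame_samples_(SamplesPer10Ms(sample_rate_hz, channels)),
        on_frame_(std::move(on_frame)) {
    assert(frame_samples_ > 0);
  }

  // Appends new samples, then emits every complete 10 ms frame.
  void Push(const int16_t* samples, std::size_t count) {
    pending_.insert(pending_.end(), samples, samples + count);
    std::size_t offset = 0;
    while (pending_.size() - offset >= frame_samples_) {
      on_frame_(pending_.data() + offset, frame_samples_);
      offset += frame_samples_;
    }
    // Keep the incomplete tail for the next Push().
    pending_.erase(pending_.begin(),
                   pending_.begin() + static_cast<std::ptrdiff_t>(offset));
  }

  std::size_t pending() const { return pending_.size(); }

 private:
  std::size_t frame_samples_;
  FrameCallback on_frame_;
  std::vector<int16_t> pending_;
};
```

This version keeps things simple with one vector and one erase per push. On a real-time audio thread, a preallocated ring buffer would avoid the allocations.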

I can't create an AudioDeviceModule in Java alone. I have to create it in native C++ and use the JNI bridge to communicate between Java and C++. So I created a simple C++ module in the Android project and used the JNI bridge to call some C++ methods and get data back. Now it's time to create a custom ADM (AudioDeviceModule) in C++ for WebRTC. For that, I have to compile the whole WebRTC source code (which uses Chromium's tooling) for Android, and then link the libraries and include directories in the Android CMake file. First, I have to install Chromium's depot_tools to get tools like gclient, fetch and git-cl for fetching the WebRTC repositories.

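```cpp
#include <jni.h>

// Called from Java with each chunk of PCM bytes read from AudioRecord.
extern "C" JNIEXPORT void JNICALL
Java_com_example_ScreenAudio_nativeFeedFrame(JNIEnv* env, jobject thiz, jbyteArray data) {
    jsize length = env->GetArrayLength(data);
    jbyte* samples = env->GetByteArrayElements(data, nullptr);
    if (samples == nullptr) {
        return;  // the JVM could not provide the buffer; an exception is pending
    }

    // TODO: hand `samples` (`length` bytes of 16-bit PCM) to the custom ADM.

    // JNI_ABORT: the buffer was only read, so skip copying it back to Java.
    env->ReleaseByteArrayElements(data, samples, JNI_ABORT);
}
```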
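The JNI function receives raw bytes, while WebRTC works with 16-bit samples. A minimal conversion sketch follows, assuming little-endian PCM (the byte order of Android's ARM and x86 ABIs); `BytesToPcm16` is a hypothetical helper name. On the Java side, AudioRecord also has a `read(short[], int, int)` overload, which would let you pass a `jshortArray` and skip this step entirely.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: turns raw 16-bit PCM bytes (e.g. the jbyte* from
// GetByteArrayElements; jbyte is a signed 8-bit type) into samples.
// Assumes little-endian byte order; a trailing odd byte is ignored.
std::vector<int16_t> BytesToPcm16(const int8_t* bytes, std::size_t byte_count) {
  assert(bytes != nullptr || byte_count == 0);
  std::vector<int16_t> samples(byte_count / 2);
  for (std::size_t i = 0; i < samples.size(); ++i) {
    const uint16_t lo = static_cast<uint8_t>(bytes[2 * i]);
    const uint16_t hi = static_cast<uint8_t>(bytes[2 * i + 1]);
    // Low byte first, then high byte; reinterpret the 16 bits as signed.
    samples[i] = static_cast<int16_t>(static_cast<uint16_t>(lo | (hi << 8)));
  }
  return samples;
}
```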

I followed this documentation to compile the WebRTC source code on Linux (x86) for Android, which took almost a whole day to clone, sync and compile:

https://webrtc.github.io/webrtc-org/native-code/android

A few build arguments for Android are missing from that documentation, which I only understood later on. With the WebRTC Android build ready, I have to copy the .so files into the libs folder and the WebRTC headers from the build into cpp/webrtc/include, then link those directories and files in the CMake configuration.

For anyone interested, I keep a public repo with my WebRTC C++/JNI experiments here: https://github.com/adityasharma-tech/webrtc-cpp-learning (My more complete work lives in private repos, but this one is for sharing the basics I test and learn.)

Here are a few resources that took me months to find:

https://docs.oracle.com/javase/8/docs/technotes/guides/jni/spec/jniTOC.html

https://webrtc.googlesource.com/src/%2B/HEAD/modules/audio_device/g3doc/audio_device_module.md

https://gist.github.com/mysteryjeans/dfddbf73ab232fd3ef17c51d3b38433d

https://webrtc.github.io/webrtc-org/architecture/#webrtc-native-c-api

https://w3c.github.io/webrtc-pc/#simple-peer-to-peer-example

https://webrtc.googlesource.com/src/%2B/refs/heads/lkgr/api/g3doc/index.md

https://webrtc.googlesource.com/src/+/refs/heads/main/examples/androidnativeapi/jni